
John Wanamaker, patron saint of marketing measurement.

But as I gathered answers from the two dozen vendors who will be included in the CDP Institute’s comparison report, I found that at best one or two provide the type of attribution I had in mind. That was too few to justify keeping attribution in the screening list. Still, the vendors offered an impressive variety of alternative answers to the question, and those are worth a look.

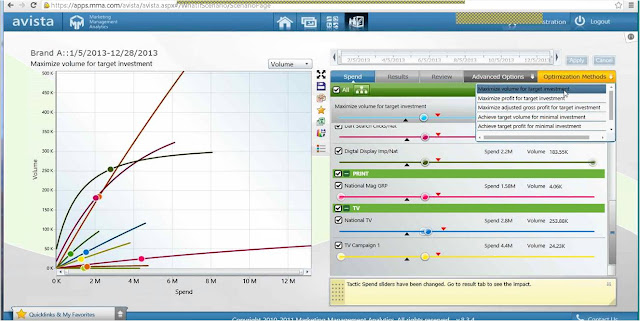

- Marketing mix models. This is the attribution approach I originally intended to cover. It gathers all the marketing touches that reach a customer, including email messages, Web site views, display ad impressions, search marketing headlines, and whatever else can be captured and tied to an individual. Statistical algorithms then look at customers who had a similar set of contacts except for one item and attribute any difference in performance to that item. In practice, this is much more complicated than it sounds because the system needs to deal with different levels of detail and intelligently combine cases that lack enough data to treat separately. The result is an estimate of the average value generated by incremental spending in each channel. These results are sometimes combined with estimates created using different techniques to cover channels that can’t be tied to individuals, such as broadcast TV. The estimates are used to find the optimal budget allocation across all channels, a.k.a. the marketing mix.

- Next best action and bidding models. These also estimate the impact of a specific marketing message on results, but work at the individual rather than the channel level. The system uses a history of marketing messages and results to predict the change in revenue (or other target behavior) that will result from sending a particular message to a particular individual. One typical use is deciding how much to bid for a display ad impression; another is choosing which products or offers to present during an interaction. They differ from incremental attribution because they create separate predictions for each individual based on that person’s history and the current context. Several CDP systems offer this type of analysis. But it’s ultimately not different enough from other predictive analytics to treat as a distinct specialty.

- First/last/fractional touch. These methods use the individual-level data about marketing contacts and results, but apply fixed rules to allocate credit. They are usually limited to online advertising channels. The simplest rules are to attribute all results to either the first or last interaction with a buyer. Fractional methods divide the credit among several touches but use predefined rules to do the allocation rather than weights derived from actual data. These methods are widely regarded as inadequate but are by far the most commonly used, because the alternatives are so much harder to implement. Several CDPs offer these methods.

- Campaign analysis. This looks at the impact of a particular marketing campaign on results. Again, the fundamental method is to compare the performance of individuals who received a particular treatment with those who didn’t. But there’s usually more effort to ensure the treated and non-treated groups are comparable, either by setting up A/B test splits in advance or by analyzing results for different segments after the fact. The primary unit of analysis here is the campaign audience, not the specific individuals. The goal is usually to compare results for campaigns in the same channel, not to compare efforts across channels. This is a relatively simple type of analysis to deliver since it doesn’t require advanced statistics or predictive techniques. As a result, it’s fairly common, and many systems could deliver it even without the vendor creating special features to do it.

- Content performance analysis. This is very similar to campaign analysis except that audiences are defined as people who received a particular piece of content, which could be used across several campaigns. Again, there might be formal split tests or more casual comparisons of results. Some implementations draw broader conclusions by grouping content with similar characteristics such as product, message, or offer. But unless the groups are identified using artificial intelligence, even this doesn’t add much technical complexity.
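To make the matched comparison behind the marketing mix approach more concrete, here is a minimal Python sketch. It assumes a hypothetical input in which each customer is reduced to the set of channels that reached them plus a converted/not-converted flag. It groups customers whose other contacts are identical, compares conversion rates with and without one channel, and averages the differences weighted by group size. This is a toy illustration of the idea, not any vendor’s method: real systems use far more sophisticated statistics and pool sparse groups rather than skipping them.

```python
from collections import defaultdict
from typing import Optional

# Hypothetical input: (channels that touched the customer, did they convert?)
Customer = tuple[frozenset[str], bool]


def incremental_lift(customers: list[Customer], channel: str) -> Optional[float]:
    """Estimate the conversion lift from `channel` by comparing customers whose
    other contacts are identical and who differ only in whether they saw it."""
    # Key: contacts other than `channel`; value: outcomes split by exposure.
    groups: dict[frozenset[str], dict[bool, list[bool]]] = defaultdict(
        lambda: {True: [], False: []}
    )
    for touches, converted in customers:
        groups[touches - {channel}][channel in touches].append(converted)

    weighted_diff, total_weight = 0.0, 0
    for outcomes in groups.values():
        exposed, unexposed = outcomes[True], outcomes[False]
        if not exposed or not unexposed:
            continue  # nothing to compare; a real system would pool sparse groups
        diff = sum(exposed) / len(exposed) - sum(unexposed) / len(unexposed)
        size = len(exposed) + len(unexposed)
        weighted_diff += diff * size
        total_weight += size
    # Size-weighted average difference, or None if no group had both variants
    return weighted_diff / total_weight if total_weight else None


customers = [
    (frozenset({"email"}), True),
    (frozenset({"email"}), False),
    (frozenset({"email", "display"}), True),
    (frozenset({"email", "display"}), True),
    (frozenset(), False),
    (frozenset({"display"}), False),
]
print(incremental_lift(customers, "display"))
```

Groups in which every customer either did or did not see the channel are simply skipped here; that is exactly the sparse-data problem that production systems must handle more intelligently.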
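The individual-level predictions in next best action and bidding models ultimately feed a simple calculation: the expected change in value from sending one message to one person. The sketch below assumes that some predictive model (not shown, and hypothetical here) has already estimated each person’s conversion probability with and without a given message; the `Prediction` class and its field names are illustrative inventions. It caps a display bid at the expected lift and picks the message with the largest positive lift.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Prediction:
    """Hypothetical model output for one individual and one candidate message."""
    message: str
    p_if_sent: float      # predicted conversion probability if the message is sent
    p_if_not_sent: float  # predicted conversion probability if it is not
    value: float          # revenue (or other target) per conversion

    def expected_lift(self) -> float:
        # Change in conversion probability times the value of a conversion
        return (self.p_if_sent - self.p_if_not_sent) * self.value


def max_bid(pred: Prediction) -> float:
    """Most it is worth paying for an impression: the expected lift, never below zero."""
    return max(0.0, pred.expected_lift())


def next_best_action(candidates: list[Prediction]) -> Optional[str]:
    """Choose the message with the largest positive expected lift, or None to send nothing."""
    best = max(candidates, key=lambda p: p.expected_lift(), default=None)
    if best is None or best.expected_lift() <= 0:
        return None
    return best.message


options = [
    Prediction("discount_offer", p_if_sent=0.05, p_if_not_sent=0.03, value=100.0),
    Prediction("new_arrivals", p_if_sent=0.04, p_if_not_sent=0.035, value=100.0),
]
print(next_best_action(options), round(max_bid(options[0]), 2))
```

The hard part, producing reliable per-person probabilities, is ordinary predictive modeling, which is why this category looks much like other predictive analytics.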
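The fixed rules behind first-touch, last-touch, and fractional attribution are easy to express in code, which is part of why they are so widely used. This sketch credits one conversion across an ordered list of touches. The `linear` rule splits credit evenly; the `position` rule uses a 40/20/40 “U-shaped” split, a common convention chosen here for illustration rather than a standard. No weights are learned from data.

```python
def attribute(touches: list[str], rule: str, revenue: float = 1.0) -> dict[str, float]:
    """Split credit for one conversion across an ordered list of touches using a
    fixed rule; no weights are learned from data."""
    n = len(touches)
    if n == 0:
        return {}
    if rule == "first":
        weights = [1.0] + [0.0] * (n - 1)
    elif rule == "last":
        weights = [0.0] * (n - 1) + [1.0]
    elif rule == "linear":
        weights = [1.0 / n] * n
    elif rule == "position":
        # Illustrative "U-shaped" split: 40% first, 40% last, 20% shared by the middle
        if n <= 2:
            weights = [1.0 / n] * n
        else:
            weights = [0.4] + [0.2 / (n - 2)] * (n - 2) + [0.4]
    else:
        raise ValueError(f"unknown rule: {rule!r}")

    credit: dict[str, float] = {}
    for channel, weight in zip(touches, weights):
        # A channel touched more than once accumulates credit from each touch
        credit[channel] = credit.get(channel, 0.0) + weight * revenue
    return credit


path = ["display", "search", "email", "search"]
for rule in ("first", "last", "linear", "position"):
    print(rule, attribute(path, rule, revenue=100.0))
```

Because the weights are fixed in advance, the same path always produces the same split regardless of how those channels actually performed, which is the weakness that makes these methods widely regarded as inadequate.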
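Campaign analysis, by contrast, compares a treated group with a holdout, as in an A/B split set up in advance. Below is a minimal sketch using a standard two-proportion z-test; the function name and returned fields are illustrative, and it assumes the groups were randomly assigned so the comparison is fair. It needs only Python’s standard library, consistent with the point that this kind of analysis doesn’t require advanced statistics.

```python
import math


def campaign_lift(conv_test: int, n_test: int, conv_ctrl: int, n_ctrl: int) -> dict[str, float]:
    """Compare a treated group with a randomly assigned holdout using a
    two-proportion z-test."""
    p_test = conv_test / n_test
    p_ctrl = conv_ctrl / n_ctrl
    # Pooled conversion rate under the null hypothesis of no campaign effect
    p_pool = (conv_test + conv_ctrl) / (n_test + n_ctrl)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_test + 1 / n_ctrl))
    z = (p_test - p_ctrl) / se if se > 0 else 0.0
    return {
        "lift": p_test - p_ctrl,  # absolute difference in conversion rate
        "incremental_conversions": (p_test - p_ctrl) * n_test,
        "z": z,
        # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2))
        "p_value": math.erfc(abs(z) / math.sqrt(2)),
    }


print(campaign_lift(conv_test=600, n_test=10_000, conv_ctrl=500, n_ctrl=10_000))
```

The same function would serve for content performance analysis by defining the two groups as people who did or didn’t receive a particular piece of content.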

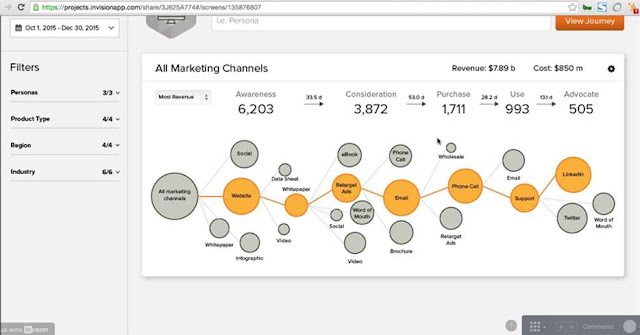

- Journey analysis. Truth be told, no vendor in my survey described journey analysis as a type of incremental attribution. But it does come up in some discussions of marketing measurement and optimization. Like marketing mix and next best action methods, journey analysis examines individual-level interactions to find larger patterns and to identify optimal choices for reaching specified goals. But it looks much more closely at the sequence of events, which requires different technical approaches to handle the resulting increase in complexity.
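Because journey analysis depends on the order of events, even a simple sketch has to keep sequences intact. One possible approach, assumed here rather than drawn from any vendor, treats journeys as a first-order Markov chain: it counts step-to-step transitions (using hypothetical `START`, `CONVERT`, and `NULL` states) and computes a conversion rate for each exact path.

```python
from collections import Counter, defaultdict

# Hypothetical input: (ordered touchpoints, did the journey end in a conversion?)
Journey = tuple[list[str], bool]


def transition_probs(journeys: list[Journey]) -> dict[str, dict[str, float]]:
    """First-order Markov view of journeys: P(next step | current step)."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for steps, converted in journeys:
        # Add synthetic start and outcome states so entry and exit are modeled too
        path = ["START", *steps, "CONVERT" if converted else "NULL"]
        for current, nxt in zip(path, path[1:]):
            counts[current][nxt] += 1
    return {
        state: {nxt: n / sum(c.values()) for nxt, n in c.items()}
        for state, c in counts.items()
    }


def conversion_rate_by_path(journeys: list[Journey]) -> dict[tuple[str, ...], float]:
    """Conversion rate for each exact ordered sequence of touches."""
    seen: Counter = Counter()
    converted_count: Counter = Counter()
    for steps, converted in journeys:
        key = tuple(steps)
        seen[key] += 1
        converted_count[key] += converted  # True counts as 1, False as 0
    return {path: converted_count[path] / seen[path] for path in seen}


journeys = [
    (["email", "search"], True),
    (["email", "display"], False),
    (["search"], True),
    (["email", "search"], False),
]
print(transition_probs(journeys)["email"])
print(conversion_rate_by_path(journeys))
```

Unlike the fixed touch-attribution rules, this treats `("email", "search")` and `("search", "email")` as different journeys, which is the sequence sensitivity that sets journey analysis apart.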

Marketing measurement is one of the primary uses of Customer Data Platforms. Dropping attribution from the list of CDP screening questions shouldn’t be taken to mean it’s unimportant. It just means that measurement is too complicated to capture in a simple screening question. As with other important CDP features, buyers who want their CDP to support marketing measurement will need to define their specific needs in detail and then closely examine individual CDP vendors to see which ones can meet them.