Despite the visibility of these factors, the uninterrupted growth of martech has been accompanied almost from the start by predictions that it will soon stop. These predictions are based less on any specific analysis than on a fundamental sense that what goes up must come down, which is generally a safe bet. There’s also an almost aesthetic judgement that a market so large is just too unruly, and that the growing number of systems used by each marketing department must indicate substantial waste and lost control.

One common metric cited as evidence for excess martech is utilization rate: Gartner, the high priests of rational tech management, reported last year that martech utilization rates fell from 58% in 2020 to 42% in 2022, and ranted in a recent press release that “The willingness to let the majority of their martech stack sit idle signifies a fundamental resource disconnect for CMOs. It’s difficult to imagine them leaving the same millions of dollars on the table for agencies or in-house resources. This trade-off of technology over people will not help marketing leaders accelerate out of the challenges a recession will bring.” They were especially incensed that their data showed CMOs are increasing martech’s share of the marketing budget, comparing them to “gamblers looking to write-off their losses with the next bet.” (They probably meant “recoup”, not “write-off” their losses.)

This isn’t just a Gartner obsession. Reports from Integrate, Wildfire, and Ascend2 also cite low utilization rates as evidence of martech overspending.

It ain’t necessarily so.

For one thing, underutilization is common in all departments, not just marketing. Nexthink found half of all SaaS licenses are unused. Zylo put the average company-wide utilization at 56% and Productiv put it at 45%. (These studies measure app usage, not feature usage. But you can safely bet that feature usage rates are similarly low throughout the organization.)

More fundamentally, there’s no reason to expect people to use all the features of the products they buy. What fraction of Excel or PowerPoint features do you use? What’s important is finding a system with the features you need; if it has other features you don’t need, that’s really okay so long as you’re not paying extra or finding they get in your way. Software vendors routinely add features required by a subset of their users. Since that helps them serve more needs for more clients, it’s a benefit, not a problem.

The real problem isn’t the presence of features you don’t need, but the absence of features you do. That’s what pushes companies to buy new systems to fill in the gaps. As mentioned earlier, the great and ongoing growth of the martech industry is due in good part to new technologies and channels creating new needs that existing systems don’t fill. That said, it’s true that some purchases are unnecessary: buyers don’t always realize that a system they own offers a capability they need. And, since vendors add new features all the time, a specialist system may become redundant if the same features are added to a company’s primary system.

In both of those situations, avoiding unnecessary expense depends on marketers keeping themselves informed about what their current systems can do. This is certainly a problem: thanks to the miracle of SaaS, it’s often easier to buy a new system than to fully research the features of systems already in place. (Installing and integrating the new system will probably be harder, but that comes later.) So we do see reports of marketers trying to prune unnecessary systems from their stacks: for example, the previously cited Integrate report found that 26% of marketers expected to shrink their stacks. Similarly, the CMO Council found 25% were planning to cut martech spend and Nielsen said 24% were planning martech reductions. (Before you sell all your martech stock, you should also know that each report found even more marketers were planning to increase their martech budgets: 32% for Integrate, 36% for CMO Council, and 56% for Nielsen.)

Let’s assume, if only for argument’s sake, that marketers are reasonably diligent about buying only products that close true gaps. New gaps will continue to appear so long as innovation continues, and there’s no reason to expect innovation will stop. So can we expect the growth in martech products will also continue indefinitely?

Until recently I would have said yes (unless budgets are severely crimped by recession). But the latest round of AI-based tools has me reconsidering.

Specifically, I wonder whether marketers will close gaps by building their own applications with AI instead of buying applications from someone else. If so, industry expansion could halt.

Marketers have been using no-code technologies to build their own applications for some time. Some of these may have depressed demand for new martech products, but I don’t think the impact has been substantial. That’s because no-code tools are usually constrained in some way: they’re an interface on top of an existing application (drag-and-drop journey builders), a personal productivity tool (Excel), or limited to a single function (Zapier for workflow automation). Building a complete application with such limited tools is either impossible or not worth the trouble.

The latest AI systems change this. Chat-based interfaces let users develop an application by describing what they want a system to do. This enables the resulting system to perform a much broader set of tasks than one built with a drag-and-drop no-code interface. That said, the actual capabilities depend on what the model is trained to do. Today, it still takes considerable technical knowledge to include the right technical details in the instructions and to refine the result. But the AIs will quickly get better at working out those details for themselves, drawing on broader and more sophisticated knowledge about how things should be done. Microsoft’s latest description of copilots and plug-ins points in this direction: “customers can use conversational language to create dataflows and data pipelines, generate code and entire functions, build machine learning models or visualize results.”

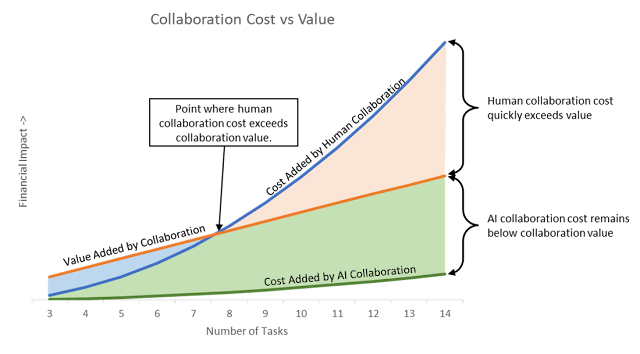

What’s important is that the conversational interface will drive a system that automatically employs professional-grade practices to ensure the resulting application is properly engineered, tested, deployed, and documented. In effect, it pairs the business user with a really smart developer who will properly execute what the user describes, let the user examine the results, and tweak the product until it meets her goals. Human developers who can do this are rare and expensive. AI-based developers who can do this should soon be common and almost free.

This change overcomes the fundamental limitation of most user-built apps: they can only be deployed and maintained by the person who built them and are almost guaranteed to violate quality, security and privacy standards. This issue – let’s call it governance – has almost entirely blocked deployment of user-built systems as enterprise applications. Chat-built systems remove that barrier while fundamentally altering the economics of system development. More concretely: building becomes cheaper so buying becomes less desirable. This could significantly reduce the market for purchased software.

Anyone who has ever been involved in an enterprise development project will immediately recognize the flaw in this argument: there’s never just one user and most development time is actually spent getting the multiple stakeholders to agree on what the system should do. Agile methodologies mitigate these issues but don’t entirely eliminate them.

Whether chat-driven development can overcome this barrier isn’t clear. It will certainly speed some things up, which might enable teams to build and compare alternative versions when trying to reach a decision. But it might also enable independent users to create their own versions of an application, which would probably lead to even stiffer resistance to adopting someone else’s approach.

One definite benefit should be that chat-based applications will learn to explain how they function in terms that humans can understand. Responsible tech managers will insist on this before they deploy systems those applications create.

In any event, I do believe that chat-built systems will make home-built software a viable alternative to packaged systems in a growing number of situations. This will especially apply to systems that fill small, specialized gaps created as new marketing technologies develop. Since filling these gaps has been a major factor behind the continuous growth of martech, industry growth may slow as a result.

Incidentally, we need a name for chat-based development. I’ll nominate “vo-code”, as shorthand for voice-based coding, since it fits in nicely with pro-code, low-code, and no-code. I could be talked into “robo-code” for the same reason.