Still, preparing the slides gave me a chance to scan the surveys in my archives, which was entertaining in its own little way. Many surveys ask similar questions, which gave me some choices during my preparation. But I didn’t look carefully at how they compare.

Today I’ll do that. I’ve chosen one of the most popular questions: what are the barriers to marketing technology adoption? I have versions of this from seven different surveys within the past year.

Of course, each survey uses different terms. To make the comparison, I collapsed the various answers into a few reasonably distinct categories, committing a certain amount of shoe-horning along the way. I then recorded where each answer ranked in each survey, compiled the results, and did a crude ranking with a combination of mathematical wizardry and body English. (Multiple answers for the same survey indicate I placed several answers into the same category.)
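One way a crude ranking like this could be sketched in Python is shown here. It is an illustrative assumption, not the actual calculation behind the table: the survey names, categories, ranks, and the points scheme (`max_points`) are all hypothetical. Each category gets points based on its best rank within a survey, and the points are summed across surveys.

```python
from collections import defaultdict

# Hypothetical data, NOT the real survey results.
# survey -> {category: [ranks of every answer mapped to that category]}
rankings = {
    "survey_a": {"budget": [1], "process": [2, 4], "skills": [3]},
    "survey_b": {"skills": [1], "process": [2], "budget": [4]},
    "survey_c": {"budget": [1], "it_support": [2], "process": [3]},
}

def score_categories(rankings, max_points=5):
    """Sum points per category across surveys.

    A category's best (lowest) rank in a survey earns
    max_points + 1 - rank points, floored at zero. Surveys that
    never mention a category contribute nothing to it.
    """
    totals = defaultdict(int)
    for ranks_by_category in rankings.values():
        for category, ranks in ranks_by_category.items():
            best_rank = min(ranks)
            totals[category] += max(max_points + 1 - best_rank, 0)
    # Highest total first; ties broken alphabetically for stable output.
    return sorted(totals.items(), key=lambda item: (-item[1], item[0]))

overall = score_categories(rankings)
for category, points in overall:
    print(f"{category}: {points}")
```

A points scheme like this rewards categories that show up near the top often, which is how an item can rank high overall without ever being listed first. It also penalizes categories that a survey simply didn't ask about, so any real use would still need some judgment on top.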

Results are below. I've shaded the first-ranked answers in orange and the second- and third-ranked answers in yellow.

My first observation was the sheer inconsistency of the answers. Budget issues emerged as a clear number one, but they reached that rank in just four of the seven surveys and ranked quite low in the other two that included them. The second-ranked item (marketing process) was never listed first; it ended where it did because it had the most twos and threes. No other item was ranked first more than once or in the top three more than twice.

Things made a bit more sense when I looked at the survey audiences. Winterberry and Forrester were specifically about online marketing, Gleanster and Marketing Sherpa were B2B surveys, and IBM and the two CMO Council studies were of general marketers. Since most B2B marketing is also online, it makes sense to look at the first four as one group and the other three as another.

Now we see some interesting consistencies:

• Budget isn’t much of an issue for the online and B2B marketers, but it is the dominant one for the mixed marketers.

• Marketing process and marketing staff skills are major concerns for online and B2B but rarely mentioned by the mixed marketers.

• Senior management support, and to a lesser extent IT support and technology capabilities, are significant barriers for mixed marketers but don’t slow down the online and B2B groups.

• Metrics, organizational silos, and the economy are cited occasionally by both groups but don’t seem to be major issues for either.

So there’s a fairly coherent picture after all.

• Online and B2B marketers are struggling to keep up with a rapidly changing marketplace, meaning their biggest problems are people and process. The importance of their work is obvious enough that budgets and senior management support are generally available. They have the technical savvy and independence to avoid issues with IT support and organizational silos.

• Mixed marketers, working in traditional channels, still struggle with budgets, metrics, and senior management. They have mature marketing organizations, so process and skills are in place, at least for traditional programs. They do struggle more with IT, technology, and organizational silos, because they lack their own technical skills and have limited clout in the organization.

• Everybody says they care about metrics, but they're rarely a top priority.

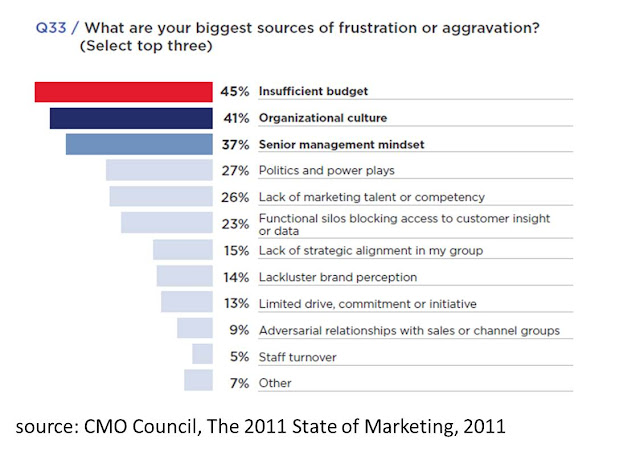

Or at least that’s my take. I’ve displayed the actual surveys below – if you reach other conclusions or spot any other patterns, let me know.